Meta released Llama 4 over a weekend, and the two new models have stirred the AI community with their scale and context lengths. For the OpenClaw ecosystem, this development signals both opportunity and challenge: local-first AI assistants must work within hardware constraints while using these advances for agent automation and plugin ecosystems.

Llama 4 Maverick is a 400 billion parameter model with 128 experts and 17 billion active parameters. It accepts text and image inputs and has a 1 million token context length. Llama 4 Scout has 109 billion total parameters, 16 experts and 17 billion active parameters; it is also multi-modal and claims an industry-first 10 million token context. Meta has also teased Llama 4 Behemoth, an unreleased model with 288 billion active parameters, 16 experts and 2 trillion total parameters. Meta describes it as their most powerful model yet and says it was used to train both Scout and Maverick. A reasoning model remains forthcoming, hinted at on a coming soon page with a looping video of an academic-looking llama.
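To make the gap between total and active parameters concrete, here is a minimal sketch that computes the share of weights used for any single token. The figures are the ones quoted above; the dictionary layout and the `active_fraction` helper are illustrative names, not part of any Meta tooling.

```python
# Parameter counts in billions, as quoted in the text above.
MODELS = {
    "Llama 4 Scout": {"total_b": 109, "active_b": 17, "experts": 16},
    "Llama 4 Maverick": {"total_b": 400, "active_b": 17, "experts": 128},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's weights that participate in a single token."""
    return active_b / total_b


for name, spec in MODELS.items():
    share = active_fraction(spec["total_b"], spec["active_b"])
    print(f"{name}: {share:.2%} of weights active per token")
```

Both models do the same amount of per-token work (17 billion active parameters), but all of the weights still have to be resident in memory, which is why total size dominates the hardware discussion.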

On the LM Arena leaderboard, Llama 4 Maverick currently holds second place, just behind Gemini 2.5 Pro. This ranking might not reflect the exact released version, however: Meta’s announcement specifies an experimental chat version scoring an Elo of 1417. Users can test these models via OpenRouter’s chat interface or API, which routes through providers like Groq, Fireworks, and Together. Scout’s promised 10 million token input faces practical limits, with current providers capping it at 128,000 or 328,000 tokens. For Maverick’s 1 million token context, Fireworks offers 1.05 million tokens and Together 524,000. Groq does not yet offer Maverick.

Meta’s build_with_llama_4 notebook sheds light on the difficulty of reaching 10 million tokens, noting that on 8xH100 hardware in bf16, Scout can handle up to 1.4 million tokens. Jeremy Howard points out that both models are giant Mixture of Experts architectures unsuitable for consumer GPUs, even with quantization. He suggests Macs could be a viable platform thanks to their ample memory: their lower compute performance matters less because MoE models activate fewer parameters per token. Quantization does not close the gap, either: a 4-bit quantized version of the 109B model is too large for a single RTX 4090, or even a pair of them.
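The 4-bit claim can be checked with back-of-the-envelope arithmetic. The sketch below estimates weight storage only, assuming 24 GB of VRAM per RTX 4090 and 1 GB = 10^9 bytes. It ignores the KV cache, activations and quantization metadata, so real requirements are higher. The function name is illustrative.

```python
def weight_memory_gb(total_params_b: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Billions of parameters times bits per weight, divided by 8 bits per byte.
    Excludes KV cache, activations and quantization overhead.
    """
    return total_params_b * bits_per_weight / 8


RTX_4090_VRAM_GB = 24  # assumption: VRAM per RTX 4090 card

scout_4bit = weight_memory_gb(109, 4)
for cards in (1, 2):
    vram = cards * RTX_4090_VRAM_GB
    verdict = "fits" if scout_4bit <= vram else "does not fit"
    print(f"{cards} x RTX 4090 ({vram} GB): {scout_4bit:.1f} GB of weights {verdict}")
```

Under these assumptions the 4-bit weights alone come to 54.5 GB, more than the 48 GB two cards provide together.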

Ivan Fioravanti reports performance metrics from running Llama-4 Scout on MLX with an M3 Ultra Mac: 3-bit quantization yields 52.924 tokens per second using 47.261 GB of RAM, 4-bit yields 46.942 tokens per second with 60.732 GB, 6-bit yields 36.260 tokens per second with 87.729 GB, 8-bit yields 30.353 tokens per second with 114.617 GB, and fp16 yields 11.670 tokens per second with 215.848 GB. The RAM required escalates from a 64 GB machine for 3-bit to a 256 GB machine for fp16, underscoring the hardware demands of local deployment within the OpenClaw framework.
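As a rough sanity check on these figures, the sketch below converts each reported RAM number into an implied number of bits per weight. It assumes all of the reported RAM is weights and that 1 GB = 10^9 bytes; neither is confirmed by the source, so treat the results as approximate. The names `RUNS` and `implied_bits_per_weight` are illustrative.

```python
SCOUT_PARAMS_B = 109  # total parameters in billions

# (label, tokens per second, reported RAM in GB), copied from the figures above
RUNS = [
    ("3-bit", 52.924, 47.261),
    ("4-bit", 46.942, 60.732),
    ("6-bit", 36.260, 87.729),
    ("8-bit", 30.353, 114.617),
    ("fp16", 11.670, 215.848),
]


def implied_bits_per_weight(ram_gb: float, params_b: float = SCOUT_PARAMS_B) -> float:
    """Bits per parameter if all reported RAM were weights (an assumption)."""
    return ram_gb * 8 / params_b


for label, tok_s, ram_gb in RUNS:
    bits = implied_bits_per_weight(ram_gb)
    print(f"{label:>5}: {tok_s:6.1f} tok/s, {ram_gb:7.1f} GB, ~{bits:.1f} bits/weight")
```

The implied values land close to each nominal bit width, and throughput falls as the footprint grows, consistent with generation speed being limited by how many bytes must be read per token.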

The model card includes a suggested system prompt with notable directives: it instructs the model to avoid lecturing on inclusivity, allows writing in specified voices or perspectives, permits rudeness when prompted, and prohibits phrases implying moral superiority like “it’s important to” or “remember.” It also tells the model not to refuse political prompts and to help users express their opinions. System prompts like this often hint at behavior that post-training did not fully adjust, which is relevant for OpenClaw users fine-tuning local agents.

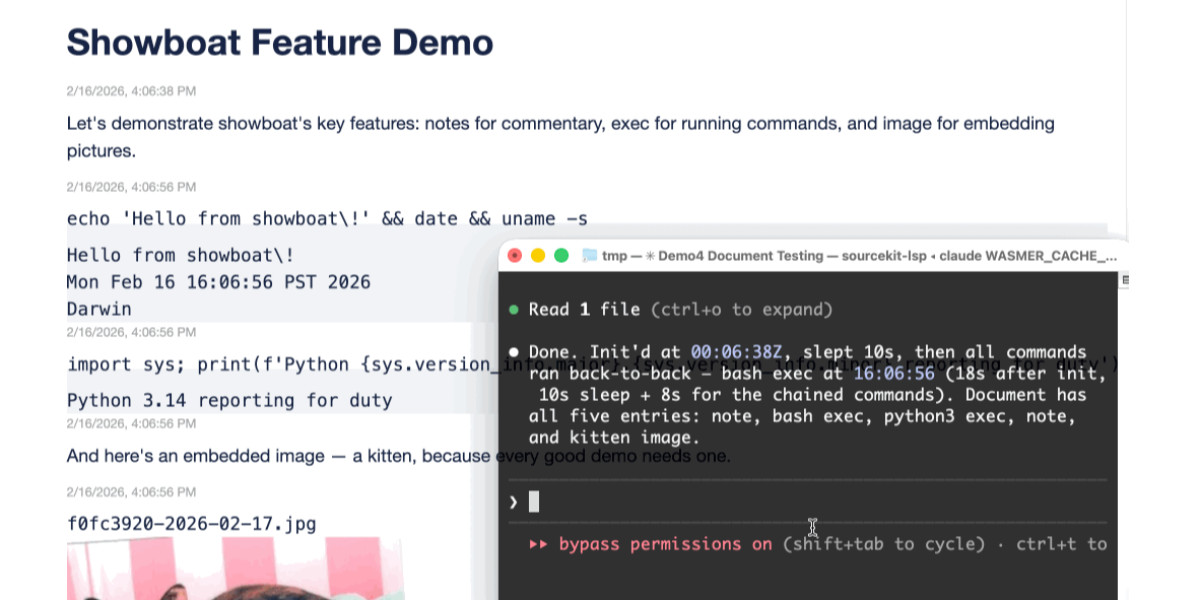

To experiment with these models using LLM, install the `llm-openrouter` plugin, set an OpenRouter key, and run a command such as `llm -m openrouter/meta-llama/llama-4-maverick hi`. Testing long-context capabilities involved summarizing a Hacker News discussion about Llama 4 using a script that wraps LLM. Llama 4 Maverick produced a reasonable summary, starting with themes on release and availability, though output quality varied. Llama 4 Scout via OpenRouter returned garbled results, possibly due to provider issues: Meta AI’s Ahmad Al-Dahle acknowledged mixed quality across services and said he expected it to improve over the following days.
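For readers who would rather call OpenRouter directly than go through the LLM plugin, here is a minimal standard-library sketch of the same "hi" prompt. It assumes OpenRouter's OpenAI-style chat completions endpoint and that the model ID is the LLM model name without the `openrouter/` prefix. The `OPENROUTER_API_KEY` variable name and both helper functions are choices made for this example only.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_request(prompt: str, model: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, model: str = "meta-llama/llama-4-maverick") -> str:
    """Send the prompt; needs network access and OPENROUTER_API_KEY set."""
    request = build_request(prompt, model, os.environ["OPENROUTER_API_KEY"])
    with urllib.request.urlopen(request) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]


# Example (performs a real, billable API call):
# print(ask("hi"))
```

Keeping request construction separate from sending makes it easy to inspect the payload, or to swap in a different model ID, without spending tokens.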

Subsequent attempts with the llm-groq plugin ran into a 2048 token output limit and yielded a brief 630-token summary. In comparison, Gemini 2.5 Pro generated 5,584 output tokens, plus additional thinking tokens. These early results caution against hasty judgments, since provider configurations may not yet be optimized for long-context prompts, a consideration for OpenClaw’s integration strategies.

Hopes for Llama 4 mirror the evolution of Llama 3, which debuted with 8B and 70B models, followed by 3.1 versions up to 405B, and later 3.2 models including 1B and 3B for mobile devices and 11B and 90B with vision support. Llama 3.3 introduced a 70B model comparable to the earlier 405B in performance. Llama 4’s current 109B and 400B models, trained with help from the unreleased 2T Behemoth, suggest a potential family of varied sizes. Anticipation builds for a ~3B model for phones or a ~22-24B model suited to 64GB laptops, in the same class as the excellent Mistral Small 3.1, which would align with OpenClaw’s focus on scalable local AI assistants.